- Daniel Shawul Abdi

- Research Associate (CV)

- Naval Postgraduate School

Department of Applied Mathematics

833 Dyer Road; Building 232, SP-249A

Monterey, California 93943

United States of America

- +1 (831) 656-2293

- +1 (831) 582-7010

- dsabdi@nps.edu

- dshawul@yahoo.com

- Public key

Research

My research interests primarily relate to scientific computation in atmospheric sciences and civil engineering, specifically wind and structural engineering. During my PhD I developed a finite-volume code for the simulation of wind flow over complex terrain. Recently, I have extended the code to use high-order Discontinuous Galerkin methods.

Publications

- Current

- D. Abdi and F.X. Giraldo and Emil M. Constantinescu and Lester E. Carr III and L. Wilcox and T. Warburton, "Acceleration of a Semi-Implicit Non-hydrostatic Unified Model of the Atmosphere (NUMA) on manycore processors," 2016 (To be submitted) [link]

- D. Abdi and L. Wilcox and T. Warburton and F.X. Giraldo, "A GPU accelerated continuous and discontinuous Galerkin non-hydrostatic atmospheric model," 2015 (Submitted) [link]

- 2016

- D. Abdi and F.X. Giraldo, "Efficient Construction of Unified Continuous and Discontinuous Galerkin Formulations for the 3D Euler Equations," Journal of Computational Physics, vol. 320, pp. 46–68, 2016. DOI: 10.1016/j.jcp.2016.05.033.

- D. Abdi and G. Bitsuamlak, "Wind flow simulations in idealized and real built environments with models of various level of complexity," Wind and Structures, vol. 22, no. 4, pp. 503–524, 2016. DOI: 10.12989/was.2016.22.4.503.

- 2015

- D. Abdi and G. Bitsuamlak, "Asynchronous parallelization of a CFD solver," Journal of Computational Engineering, 2015. DOI: 10.1155/2015/295393.

- 2014

- D. Abdi and G. Bitsuamlak, "Wind flow simulations on idealized and real complex terrain using various turbulence models," Advances in Engineering Software, vol. 75, no. 1, pp. 30–41, 2014. DOI: 10.1016/j.advengsoft.2014.05.002.

- D. Abdi and G. Bitsuamlak, "Numerical evaluation of the effect of multiple roughness changes," Wind and Structures, vol. 19, no. 6, pp. 585–601, 2014. DOI: 10.12989/was.2014.19.6.585.

- 2012

- D. Abdi and G. Bitsuamlak, "Development of computational tools for large scale wind simulations," ATC and SEI Advances in Hurricane Engineering Conference, pp. 1006–1016, 2012. DOI: 10.1061/9780784412626.087.

- D. Abdi and G. Bitsuamlak, "Assessing the effect of boundary conditions on simulating atmospheric boundary layer," 2012, Joint Conference EMI/PMC [link]

- 2010

- D. Abdi and G. Bitsuamlak, "Estimation of surface roughness using CFD," 2010, The Fifth International Symposium on Computational Wind Engineering [link].

- 2009

- D. Abdi and S. Levine and G. Bitsuamlak, "Application of an artificial neural network model for boundary layer wind tunnel profile development," 2009, 11th Americas conference on wind Engineering [link].

Codes

- NebulaSEM - A finite volume and discontinuous Galerkin solver for fluid flow simulations and more.

- NUMA - The Nonhydrostatic Unified Model of the Atmosphere.

- StAnD - A linear static/dynamic structural analysis and design program.

NebulaSEM is a fairly intricate program for solving different types of partial differential equations (PDEs) using the finite-volume method (FVM) and its high-order counterpart, the discontinuous Galerkin method (DG-FEM). It is written in high-level, object-oriented C++ that allows one to solve PDEs with a few lines of code (e.g. 10 lines for solving the scalar transport equation). Behind the scenes, the code is parallelized using MPI, with proper overlapping of communication and computation, to run on a cluster of computers. This hides the nitty-gritty details of HPC programming from the programmer.

```cpp
void transport() {
    for (AmrIteration ait; !ait.end(); ait.next()) {         /* AMR iteration object (ait) and loop */
        VectorCellField U("U", READWRITE);                    /* Velocity field defined over the grid */
        ScalarCellField T("T", READWRITE);                    /* Scalar field */
        ScalarFacetField F = flx(U);                          /* Compute flux field */
        ScalarCellField mu = 1;                               /* Diffusion parameter */
        for (Iteration it(ait.get_step()); !it.end(); it.next()) { /* Time loop with support for deferred correction */
            ScalarCellMatrix M;                               /* Matrix for the PDE discretization */
            M = div(T,U,F,&mu) - lap(T,mu);                   /* Divergence & Laplacian terms */
            addTemporal<1>(M);                                /* Add temporal derivative */
            Solve(M);                                         /* Solve the matrix */
        }
    }
}
```

NebulaSEM uses a self-written Adaptive Mesh Refinement (AMR) library for polyhedral cells, which is applied to a solver code through a single outer loop. The above code snippet shows how the scalar transport equation is solved with AMR using one line of code. I know of no other library that supports AMR on polyhedral cells. The animations below show the lid-driven cavity problem, a benchmark problem for the incompressible Navier-Stokes equations, solved with AMR using 1-level (left) and 2-or-more-level (right) refinement.

NebulaSEM has several RANS turbulence models, such as the standard k-epsilon, k-omega and RNG k-epsilon models. It also has the Smagorinsky LES model, with which the following animation was produced.

To learn and use the NebulaSEM library quickly, it is best to start from the [github] repository or the Doxygen documentation.

I have contributed to the Non-hydrostatic Unified Model of the Atmosphere, smartly named NUMA by my supervisor Francis X. Giraldo. NUMA is a compressible Navier-Stokes solver specifically designed to solve the governing equations of nonhydrostatic atmospheric modeling. NUMA solves the equations in a cube domain as well as on a sphere. NUMA is the dynamical core of the U.S. Navy's NEPTUNE system. NEPTUNE stands for the Navy's Environmental Prediction sysTem Using the NUMA corE. NUMA / NEPTUNE participated in a recent NOAA (National Oceanic and Atmospheric Administration) study conducted to determine which model will be used operationally by the U.S. National Weather Service.

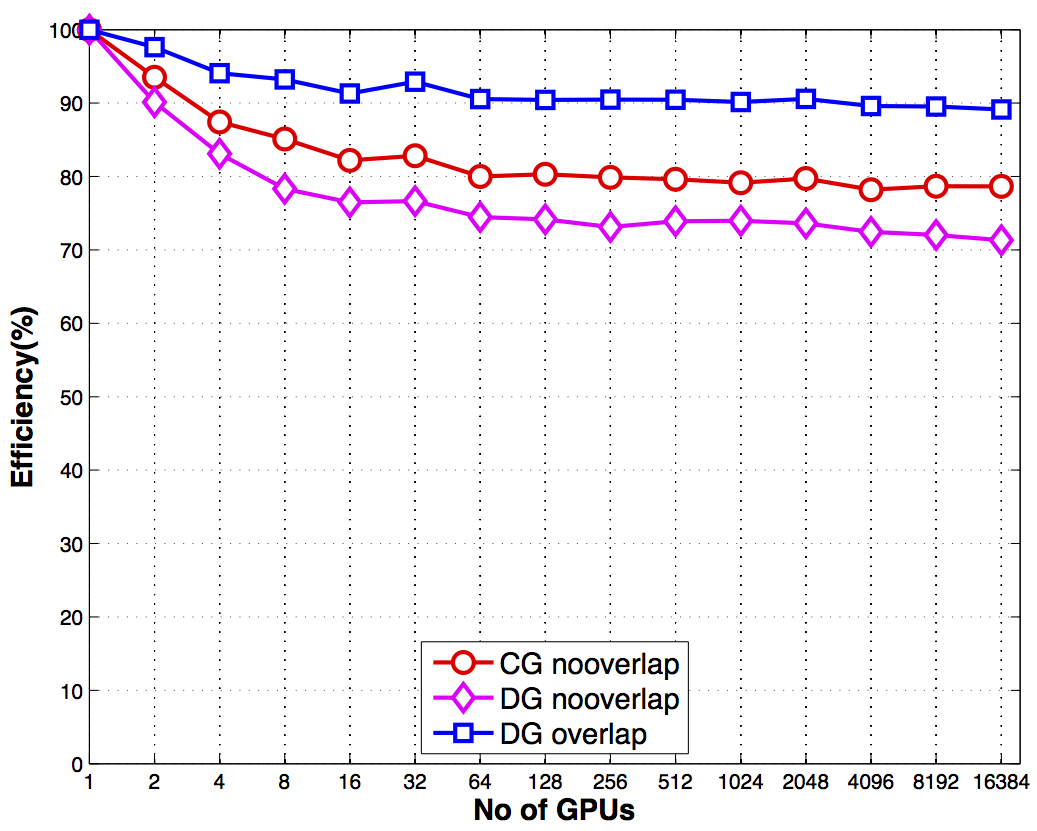

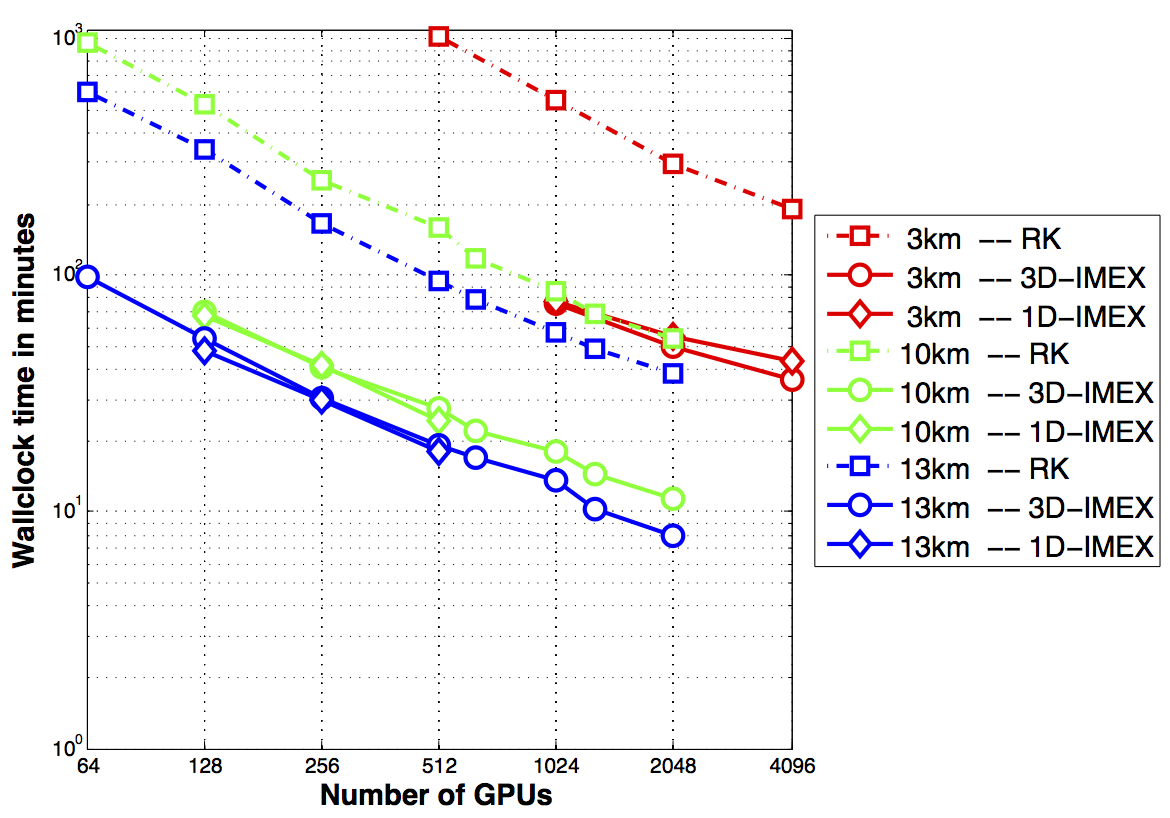

My contributions to NUMA are mainly on the high-performance computing (HPC) side. My main achievement is porting NUMA to run on a range of accelerators such as GPUs and Intel's KNL, and even on standard CPU cores, using a new language called OCCA. NUMA uses the same kernels on both GPUs and CPUs because the kernels are compiled at runtime for the specific device. This approach also allows device-specific optimizations that would not otherwise be possible. I have tested the GPU implementation using up to 16384 GPUs on Titan, the second fastest supercomputer in the world, and found more than 90% scalability. Moreover, a single K20X GPU card delivered approximately a 200X speedup over a single CPU core.

My other contribution was unifying several branches of NUMA into one code without degrading performance. This was originally necessary because we needed the discontinuous Galerkin implementation of NUMA for GPUs, but the main branch used the continuous Galerkin method. I have unified the cG and dG versions and even added the capability to use both in the same simulation, in different regions of the domain. The dG discretization can be more stable than cG without additional stabilization. Here are some of my favourite NWP test cases: on the left is the rising thermal bubble problem, and on the right an acoustic wave traveling around the globe.

Later on, I was able to incorporate the 2D version, which solves the shallow water equations, into the 3D version of NUMA. This was achieved by noticing that a polynomial order of 0 in the z-direction is the same as the 2D version. Furthermore, taking advantage of the similarity between the shallow water equations and the compressible Euler equations, I reduced the task to just initializing the test problems. The only major difference between solving the compressible Euler and shallow water equations turned out to be the way pressure is computed. This allowed us to immediately benefit from the HPC work (multi-CPU and multi-GPU) in NUMA3D for the shallow water equations as well.
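The analogy can be made concrete with the textbook forms of the two pressure terms. In the shallow water equations the fluid depth h plays the role of density and the pressure-like term in the momentum flux is g h²/2; for a dry ideal gas written in terms of potential temperature θ, the equation of state is p = p0 (R ρ θ / p0)^γ. The sketch below assumes these standard forms and typical constant values; the exact variables and constants used inside NUMA are not stated here, and the names `EquationSet` and `pressure` are hypothetical.

```cpp
#include <cmath>

enum class EquationSet { CompressibleEuler, ShallowWater };

// Pressure as a function of the "density-like" variable.
//   density: air density rho (Euler) or fluid depth h (shallow water)
//   theta:   potential temperature, used only for the Euler equations
double pressure(EquationSet eq, double density, double theta) {
    constexpr double g = 9.80616;   // gravitational acceleration [m/s^2] (assumed value)
    constexpr double p0 = 1.0e5;    // reference pressure [Pa] (assumed value)
    constexpr double R = 287.0;     // gas constant of dry air [J/(kg K)] (assumed value)
    constexpr double gamma = 1.4;   // ratio of specific heats of dry air (assumed value)
    if (eq == EquationSet::ShallowWater) {
        return 0.5 * g * density * density;  // g h^2 / 2
    }
    return p0 * std::pow(R * density * theta / p0, gamma);  // ideal-gas equation of state
}
```

Everything else in the flux computation (mass flux, momentum advection) has the same form for both systems, which is why switching the pressure function is enough to reuse the same solver.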

StAnD, better named than NebulaSEM, is a program for linear structural analysis (static/dynamic) and design of beams, plane and space trusses and frames, shells, etc. It can also design reinforced concrete and steel beams and columns using different national codes, including the Ethiopian building codes and standards.

This is a very old project of mine that I started as the final-year project of my BSc (circa 2003) at Addis Ababa University, Ethiopia. The code uses Microsoft Foundation Classes (MFC) to create a GUI that looks like the widely used SAP program. I was extremely inspired by SAP at the time, and I also didn't want to do the structural analysis and design by hand, which was required for the final-year project. To learn and use the StAnD library quickly, it is best to start from the Doxygen documentation provided in the github repository. [github]

Photography

I like to take pictures of the beautiful scenery in Monterey. The city has so many beaches, state parks and other places to enjoy nature. In the past I used to hike too, but the recent wildfire that lasted for months has consumed most of the good spots. Here are some of my photos.

Artificial Intelligence

I spent so much time developing computer programs for different types of games: Chess, Go, Checkers, Reversi. You can read about the dark side of me here!

I have been interested in artificial intelligence for a long time, starting circa 2001. Over the years, this has taught me a lot about programming and computer science in general. It was fun to learn, re-invent the wheel, refine heuristics and invent new ones for search and evaluation algorithms. I have written programs for chess, Go, checkers, Reversi, and several other variants. Different types of brute-force, selective-search, and Monte Carlo algorithms are used for the AI part of the game. I have even written programs for games with partial observability (incomplete information) and for multi-player games, and participated in several AI challenges with my bots. This was fun for me to do for a long time, and I still closely follow new developments such as the breakthrough in computer Go with deep convolutional neural networks.